| Feature name | Linux | FreeBSD | OpenBSD | TrustedBSD | NetBSD | Illumos |

| Native File System Support | Ext2/3/4 | UFS | UFS | UFS | UFS | UFS (read only) |

| XFS | ZFS | ZFS | ZFS | |||

| JFS | ||||||

| Btrfs | ||||||

| ZFS Support | Yes | Yes | No | Yes | No | Yes |

| Native ZFS | No | Yes | No | Yes | No | Yes |

| Boot Environments | No | Yes | No | Yes | No | Yes |

| Security frameworks | SELinux | Capsicum | Pledge | MAC | ||

| AppArmor | ||||||

| Compiler (native) | GCC | Clang | Clang | Clang | GCC | GCC |

| Other compiler | Clang | GCC | GCC | GCC | Clang | Clang |

| Scheduler | CFS | ULE | ULE | CSF | ||

| Event Notification SysCall | epoll | kqueue | kqueue | kqueue | kqueue | Event ports |

| Services Control | Systemd | BSD scripts | BSD scripts | BSD scripts | BSD scripts | SMF, RBAC and/or privileges |

| Firewall | IPTables | IPFW | PF | IPFW | NPF | IPF |

| PF | PF | IPF | ||||

| IPF | IPF | |||||

| Containers | chroot | chroot | chroot | chroot | chroot | chroot |

| Docker | Jails *1 | Jails *1 | Zones *2 | |||

| LXC | ||||||

| Container Security | Partial *3 | Yes | Partial | Yes | Partial | Yes |

| No | ||||||

| POSIX Compliance | Yes/No | Almost | Almost | Almost | Almost | Yes *4 |

| Probing | Partial | Full | Partial | Full | Full | Full |

| DTrace *5 | DTrace *5 | DTrace *5 | DTrace | |||

| BPF *6 | BPF | BPF | BPF | |||

| UNIX tools | UNIX tools | UNIX tools | UNIX tools | UNIX tools | UNIX tools | |

| EBPF *7 | EBPF *7 | |||||

| High Availability | uCARP | CARP *8 | CARP | CARP *8 | CARP *8 | VRRP |

| Package availability | Distro dependent. | Latest | Latest | Latest | Latest | Distro depend. |

| Permissive License | No | Yes | Yes | Yes | Yes | Yes |

| Desktop Environments | All | All | All | All | All | Distro depend. |

| Ethernet over USB | RNDIS *9 | RNDIS *9 | RNDIS *9 | RNDIS *9 | RNDIS *9 | RNDIS *9 |

chroot hasn’t been very safe historically, but the implementation on OpenBSD is formidably strong and secure.

*1 FreeBSD Jails have been present since the year 2000.

*2 Zones were heavily inspired by FreeBSD Jails and came out in 2005.

*3 Available through security frameworks like SELinux or AppArmor.

*4 A derivative of OpenSolaris, which was in turn based on Solaris; it is almost 100% compliant.

*5 DTrace originated in Solaris in the days of Sun Microsystems.

*6 BPF recently landed in Linux (under two years).

*7 eBPF also recently landed on FreeBSD, inspired by the work from Linux.

*8 CARP was designed by OpenBSD.

*9 RNDIS is a proprietary protocol from Microsoft allowing ethernet/network connections through USB devices.

Disclaimer: What you are about to read may contain inaccuracies. Feel free to discuss them somewhere else or reach me personally on my email. This is also my opinion and as such it may change through time, maybe tomorrow, next month, next year, next decade or never. I do also make very few reviews (if any) of what I write here so this article won’t be polished by any means and it is coming out of my mind and gut pretty raw.

The reader may also need to acknowledge that Linux is not UNIX. In fact, when it is combined with the GNU software beast, anyone should remember GNU stands for ‘GNU’s Not Unix’. We may share ideas but we may implement them very differently, just because we are different individuals.

Introduction.

The writing of this piece comes from the annoyance I get from reading about the prominence of Linux (the kernel) in almost all the computing spaces. And since electronic devices are gaining relevance in our daily lives and society in general this question of prominence of not just Linux but ‘X’ gains importance too.

More specifically, this writing comes after reading someone who has participated in relevant software that sits in a gazillion people’s pockets. In a very unfortunate reply to the question ‘What are the advantages Linux has over BSD now?’, the individual in question (whose identity I’d like to preserve) replied something close to this (I paraphrase): Linux receives much more investment from companies and therefore has more paid developers, plus BSD’s feature parity with Linux doesn’t hold.


This is mainstream opinion: Linux is better than anything else and money is poured in constantly, more than in other platforms. Aside from not being true, this is based not on facts but on feelings. Most GNU/Linux distributions are very average in many aspects. The fact that they run on many servers on this planet and many developers work on them doesn’t make them better than ‘X’. They are popular, but that’s it.

The individual in question did not, because he could not, point to relevant feature differences between the two operating systems.

Now go back to the top of this article, pick a specific OS and start comparing features in that hastily written, off the top of my head chart. Have fun doing that.

The truly irrelevant but meaningful bias.

It’s not amusing anymore to read about Linux vs. anything. It is very sad to read poorly argued discussions or monologues whenever there is a comparison between operating systems, or even distributions, versions, whatever, from a particular camp. For example, I used to be a Windows hater (I still collect BSOD pictures) and, as knowledge has come to me, I have started to appreciate its good technicalities. Knowledge is not the only thing that has done that, though; the fact that Microsoft finally released a decent OS with Windows 7 has also helped a lot. It only took almost thirty years.

In many comparison reviews between GNU/Linux distributions almost all the authors seem to focus on the wrong and meaningless bits of the software. Comparing apt with yum is quite embarrassing since it doesn’t have a point. Nor does comparing implementations of the GNOME desktop environment, unless the focus of the discussion is the desktop.

The real problem comes in when comparing different operating systems. Oh boy! The discussion easily falls into company ‘X’ uses product ‘A’, but hey, company ‘Y’ uses product ‘B’, and I personally use this and I get this fantastic result when doing that, or this feature in this OS makes it greater than the other OS. Then we come to benchmarks, and we have all read what Michael Larabel writes on Phoronix.com. It was ‘funny’ to read some benchmarks comparing FreeBSD with some GNU/Linux distribution (Ubuntu or CentOS mainly) where the FreeBSD results were awful. Sometimes you don’t realise you are comparing compilers, and when one OS runs GCC and the other one Clang you may get irrelevant results. Nowadays, though, Michael runs benchmarks with GCC enabled on FreeBSD, so the results are relevant for once.

Enter the ‘Benchmark paradox’ from the brilliant Brendan Gregg. To summarize it, as he’s said so many times, ‘all benchmarks are wrong’. Not only because one may think ‘X’ is being measured when in reality ‘YZ’ is, but because any software will solve a specific narrow problem, and solving them all at once better and faster than anyone else is not possible (maybe interesting, desirable but not possible).

Let’s add some salt. Almost two years ago I was introduced to a person who was working as the operations manager at a relevant bank in my country. We talked a bit of this and a bit of that, and technology came up in the discussion too. For him Solaris was something old, from the distant past, a dog in performance, something not to use anymore. In a world of containers, copy-on-write file systems, delegated administration and performance orientation, Solaris, and to some extent other software sharing relevant portions of its codebase, seemed out of the equation for him.

A few years ago, Sun’s Solaris pioneered in those areas plus in system’s visibility with the insightful DTrace. But who is everyone betting on? On Linux. Which lacked those features for years and still lacks DTrace, Btrfs not corrupting data, or secure by default containers. Well, you can have those if you use Oracle Linux, which has DTrace, enable some crazy repository to support ZFS (you may leave Oracle’s support at the door when doing so), and install some Docker with it (just remember to use SELinux too). But yes, you can use alternatives, such as BPF on Linux, use ZFS on Ubuntu or CentOS for example, and again Docker with SELinux or AppArmor if that is your choice.

There are open source and free as in freedom alternative operating systems that have those features, are good performers, and have great communities. Those are FreeBSD (a true army knife) and Illumos derivatives such as OpenIndiana (desktop, development, hosting, etc) or SmartOS (for the big data center). In fact I wrote an article some time ago.

But why, being alternatives, do they get such a ‘poor reputation’ and ‘marginal’ market share? Because humans love to win and they always bet on the winning horse. Betting otherwise is too risky, even if the reward may be greater. The question here is: who decides what the relevant aspects are to win this ‘race’? Maybe the ‘press’ has something to do with this.

The relevant things to look at.

Compilers, file systems, Inter-Process Communication (IPC) mechanisms and schedulers are some of the relevant components of an operating system. As such they ought to be the pieces of discussion when comparing operating systems. Understanding them is difficult, plus the combination of components can mask the good or bad effects of one part.

Listening to the talking head of Bryan Cantrill (sorry Bryan, but your head is the only thing we could see in that ‘appearance’) in a BSD podcast, talking about some of the hidden, buried miseries of Linux (the kernel), was enlightening for everyone.

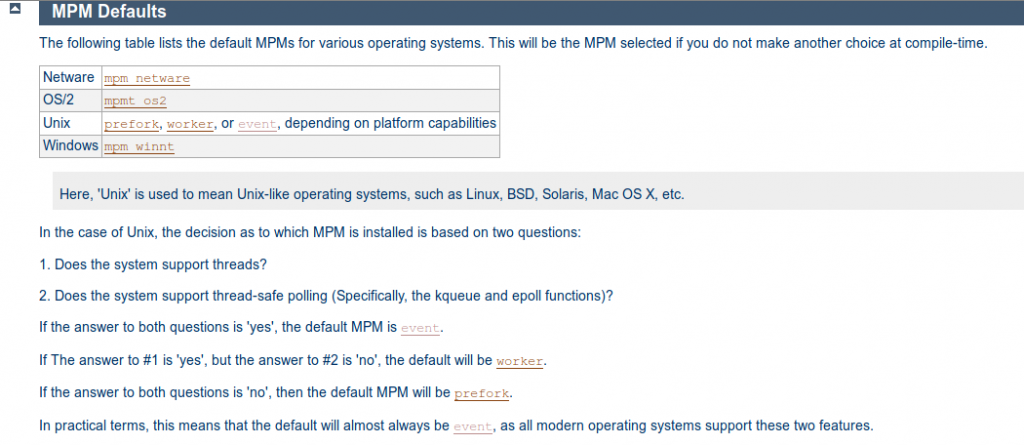

Discovering that e-poll had been broken for ages and nothing had been done about it was quite revealing. But what is e-poll at the end of the day? (I know it’s epoll, but why not e-poll?) Is it minutiae? Well… just think of how relevant the web is nowadays. E-commerce sites, news sites, personal blogs, the Netflixes and HBOs of the world, and many more things stand and rely on the web. What has been the leading web server for ages? Apache HTTP. And what does Apache HTTP have to say about e-poll? The following, ladies and gentlemen:

And what does this capture mean? A lot my friends, a lot. A bit of a context first.

Apache HTTP needs to spawn a set of processes to work. How processes are created and how the program works can be controlled by choosing a different MPM (MPM stands for Multi-Processing Module). Prefork binds each incoming connection to one Apache HTTP process that handles the request. Worker acts differently, keeping a few processes ready to handle incoming connections. In fact, with worker, one process can handle several connections, assigning one thread to each connection. Event goes a step beyond and can release connections from a thread and assign new work to it, like handling newer incoming connections.
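To make the worker idea a bit more tangible, here is a minimal sketch in Python, not Apache code by any means. It assumes a small pool of threads inside a single process, each thread owning one connection until the reply is sent. The names `handle_connection` and `worker_style` are made up for this illustration, and socket pairs stand in for real client connections.

```python
import socket
import threading
from concurrent.futures import ThreadPoolExecutor


def handle_connection(conn):
    """Hypothetical handler: one thread owns the connection until it replies."""
    with conn:
        request = conn.recv(1024)
        conn.sendall(b"handled " + request)
    return threading.current_thread().name


def worker_style(n_connections=6, n_threads=2):
    """Serve several connections with a small, fixed pool of threads."""
    clients = []
    with ThreadPoolExecutor(max_workers=n_threads,
                            thread_name_prefix="worker") as pool:
        futures = []
        for i in range(n_connections):
            # A socket pair stands in for an accepted client connection.
            client, server_side = socket.socketpair()
            client.sendall(f"request {i}".encode())
            clients.append(client)
            futures.append(pool.submit(handle_connection, server_side))
        thread_names = {f.result() for f in futures}
    replies = []
    for client in clients:
        replies.append(client.recv(1024))
        client.close()
    return replies, thread_names


if __name__ == "__main__":
    replies, thread_names = worker_style()
    print(len(replies), "connections served by", len(thread_names), "thread(s)")
```

A prefork-style server would instead dedicate a whole process to each connection, which costs more memory but keeps requests isolated from one another.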

This is important because a) threads, b) performance and c) the keep-alive problem.

Apache HTTP recommends using a threaded MPM (worker or event) on UNIX and Unix-like systems because of their benefits. However, other people do not agree with fiddling with the difficulties of threads, such as PHP creator Rasmus Lerdorf. His language powers an immense number of applications around the world and his views on the matter can be heard in the first few minutes of this presentation. Other bits of this can be read here and here.

Anyhow, when we deal with PHP we may all be using PHP-FPM, which handles every request with one process assigned to it and talks to Apache HTTP through a CGI-like interface, a ‘Fast’ one (FastCGI). But we can also be using the prefork base configuration and off we go, using more memory but running our application in a ‘safer’ manner than when using worker or event.

HTTP is a stateless protocol. It doesn’t have many control mechanisms on what has been requested. Every request is a standalone one, so packets do hold the necessary information to make the HTTP request and for the server to understand them and act. But little is retained on the server end. How to enlarge this very short lived open window? Keep-Alive comes in:

‘The Keep-Alive extension to HTTP/1.0 and the persistent connection feature of HTTP/1.1 provide long-lived HTTP sessions which allow multiple requests to be sent over the same TCP connection.’

A second excerpt from the mpm event module explanation:

‘Keep Alive handling is the most basic improvement from the worker MPM. Once a worker thread finishes to flush the response to the client, it can offload the socket handling to the listener thread, that in turns will wait for any event from the OS, like “the socket is readable”. If any new request comes from the client, then the listener will forward it to the first worker thread available. Conversely, if the KeepAliveTimeout occurs then the socket will be closed by the listener. In this way the worker threads are not responsible for idle sockets and they can be re-used to serve other requests.’

All in all this is great, since we can extend the liveliness of a connection for a short period of time but a meaningful one to sustain and get some extra input from a user using the same connection, and we can handle several of those concurrently when using a threaded mpm like event. Great, isn’t it?
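A small sketch of what keep-alive means in practice, using only the Python standard library and a throwaway local server (nothing to do with Apache itself). The handler name `PeerPortHandler` and the function `two_requests_one_connection` are invented for this example. The server answers with the client port it sees, so two identical answers suggest both requests travelled over the same TCP connection.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class PeerPortHandler(BaseHTTPRequestHandler):
    # Speaking HTTP/1.1 makes the standard library server keep connections open.
    protocol_version = "HTTP/1.1"

    def do_GET(self):
        # Reply with the client's source port as seen by the server.
        body = f"peer port {self.client_address[1]}".encode()
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the demo quiet


def two_requests_one_connection():
    """Send two GET requests through a single client connection object."""
    server = HTTPServer(("127.0.0.1", 0), PeerPortHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    conn = http.client.HTTPConnection("127.0.0.1",
                                      server.server_address[1], timeout=5)
    try:
        bodies = []
        for path in ("/first", "/second"):
            conn.request("GET", path)
            bodies.append(conn.getresponse().read())
        return bodies
    finally:
        conn.close()
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(two_requests_one_connection())
```

If the server closed the socket after each response, the client would silently reconnect from a different source port and the two bodies would differ.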

Who is in charge of organizing all this at the operating system level? In the Linux (the kernel) camp the one in charge is e-poll. On the BSDs, it is kqueue. Those two, as well as Event Ports in Illumos and Solaris and I/O Completion Ports on Windows, solve the same problem: assigning resources to input or output events. That simple, yet so complicated. Why complicated? Try doing things concurrently. Now try doing them concurrently at a great scale. What things? A client asks a web server for a resource. A network socket brings in some data, which contains a resource reference for the web server. The web server handles that, looks for the data on disk and sends it back to the client, again through the network socket. Who decides which process/es, or thread/s, will be assigned this set of actions? Multiply this by hundreds or thousands of events per second and you get an idea of the problem.
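For the curious, a rough sketch of that ‘who gets woken up for what’ loop. It uses Python’s `selectors` module, which picks epoll on Linux and kqueue on the BSDs and macOS, so the same code rides on both mechanisms. The handler `echo_upper` and the helper names are hypothetical, and a socket pair plays the role of a client connection; a real server would obviously do much more.

```python
import selectors
import socket


def echo_upper(conn):
    """Hypothetical handler: read what arrived and answer in upper case."""
    data = conn.recv(1024)
    conn.sendall(data.upper())
    return data


def serve_ready_events(sel, max_rounds=10):
    """Ask the OS which sockets are ready and call the handler stored for each."""
    handled = []
    for _ in range(max_rounds):
        events = sel.select(timeout=0.1)
        if not events:
            break  # nothing left to do
        for key, _mask in events:
            callback = key.data
            handled.append(callback(key.fileobj))
    return handled


def demo():
    sel = selectors.DefaultSelector()  # epoll on Linux, kqueue on the BSDs
    client, server_side = socket.socketpair()
    server_side.setblocking(False)
    # Register interest: "tell me when this socket is readable".
    sel.register(server_side, selectors.EVENT_READ, echo_upper)
    client.sendall(b"get /index.html")
    handled = serve_ready_events(sel)
    reply = client.recv(1024)
    sel.unregister(server_side)
    sel.close()
    client.close()
    server_side.close()
    return type(sel).__name__, handled, reply


if __name__ == "__main__":
    print(demo())
```

With a single thread there is no ambiguity about who handles an event. The trouble described in this article starts when several threads wait on the same set of descriptors.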

As explained in the paragraph above, epoll and kqueue are solving this issue, but epoll has been broken for quite some time. To make it as simple as possible (maybe not as accurate as it should be, but bear with me), the issue is in behaviour. When an event happens and data comes in, the file descriptor gets a thread assigned to it. Good. But what happens if more data comes in a bit later? Shall we give it to the same thread? Shall the data ‘wait’? If so, wait… what for? Or who for? Kqueue solves this by not consuming the new data, making it ‘wait’ until the original thread says so, because by then it’s done with the prior work it was assigned. That ‘waiting’ data is not handed over until the thread is queued to start working on that connection again. Old data and newer data from the same connection are handled correctly and by the same thread, and everything stays under control all the time. Epoll does not do this.

Epoll does what Solaris did with ‘/dev/poll’ back in the late nineties (Bryan Cantrill vividly explains it), which is the reason why they developed Event Ports shortly after. The problem is, again, assigning work to workers. If data arrives and a thread gets it assigned, this is OK. But if new data comes in (keep-alive, folks!), because the file descriptor is now closed and the thread is occupied, a new thread gets the data, so two threads get (different) data from the same connection. How to organize this?

Is this all relevant? Well… I have no idea. All I know is there are many articles on the internet explaining how to get more performance out of Apache HTTP using a multi-threaded MPM instead of the default, and that the thing managing it on Linux (epoll) has been broken for years (apparently solved in March 2016 with the 4.5 iteration of the kernel). And yes, I know, all those benchmarks we’ve all read, and some have canonized, report performance increases of this and that when using threaded MPMs in Apache, all of them prior to that date. Maybe Apache HTTP developers knew how to handle those issues, as Marek Majkowski brilliantly explains in his blog entries on this topic. He explains how to handle this epoll issue by using the EPOLLEXCLUSIVE flag or EPOLLONESHOT. But at the end of the day I have no clue. This could perfectly have been the ‘workaround’ Apache HTTP took, so the issue went unnoticed.
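Here is a tiny sketch of the one-shot idea, assuming a Linux box, since Python only exposes `select.epoll` there (the function returns `None` elsewhere). It is my own illustration, not code from Marek’s posts or from Apache. With EPOLLONESHOT a descriptor goes silent after its first event and stays silent until the worker re-arms it, so a second thread cannot be woken up for the same connection in the meantime. The function name `oneshot_demo` is made up.

```python
import select
import socket


def oneshot_demo():
    """Show that EPOLLONESHOT silences a descriptor until it is re-armed."""
    if not hasattr(select, "epoll"):
        return None  # not Linux: epoll is not available here
    reader, writer = socket.socketpair()
    ep = select.epoll()
    mask = select.EPOLLIN | select.EPOLLONESHOT
    try:
        ep.register(reader.fileno(), mask)

        writer.send(b"first")
        first = len(ep.poll(1.0))        # event delivered, descriptor disarmed

        writer.send(b"second")
        while_busy = len(ep.poll(0.2))   # more data arrived, but no new wake-up

        reader.recv(1024)                # the "worker" drains what it was given
        writer.send(b"third")
        ep.modify(reader.fileno(), mask)  # worker is done: re-arm the descriptor
        after_rearm = len(ep.poll(1.0))  # now the pending data is reported

        return first, while_busy, after_rearm
    finally:
        ep.close()
        reader.close()
        writer.close()


if __name__ == "__main__":
    print(oneshot_demo())
```

The price is an extra system call per event to re-arm the descriptor, which is part of why this reads as a workaround rather than a fix.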

Funnily enough, the epoll semantics is based on the BSD developed select (from the dark ages) and shares some of its flaws.

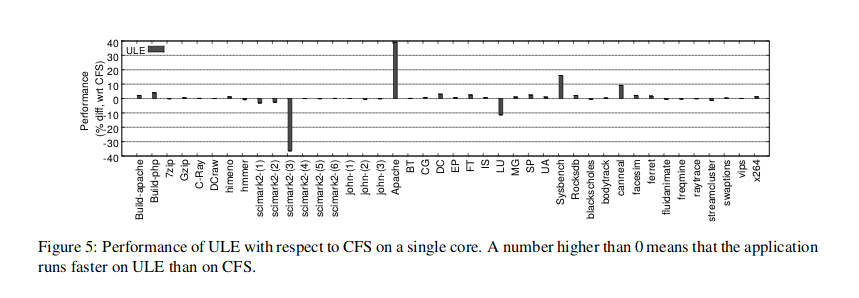

Let’s shift gears and move on to the scheduler. I remember reading, somewhere, that the BSD scheduler was better than the Linux (kernel) one. And I also read the opposite somewhere else. Who is right? While working at a big three-letter company (not IBM) I was dealing with security vulnerabilities a lot of the time. This gave me the opportunity and the time to read a lot of papers. One day I came across the following paper: a proper comparison of the Linux CFS scheduler with the FreeBSD ULE. How did they do this? Other parts of the system can influence the results, so they ported ULE to Linux so that everything was the same but the scheduler. Any change in results could then be attributed to the scheduler. Obviously there is more to it, so if this intrigues you, just read the paper. But hey, look at this:

I still can’t wrap my head around this. The first noticeable thing is there isn’t much difference between the two. But there is one benchmark where FreeBSD’s ULE goes south by a strong margin (36%): Scimark, a floating point benchmark. That said, there is a load where FreeBSD’s scheduler performs brilliantly, 40% better, and that is Apache. Ain’t it great? Ain’t it funny knowing the majority of web servers run on Linux-based systems, whose scheduler seems to perform worse than an open source one, free as in freedom and in cost, known as FreeBSD’s ULE?

The last question is just me trolling you, my dear reader. Operating systems have more components that affect their performance. In fact I am pretty sure a very knowledgeable person like Brendan Gregg could tune any Linux box to perform as well as or even outperform any BSD. And vice versa for other loads. Fine tuning systems is important. And yes, many companies just take the OS as it comes out of the box and make it do work without tinkering with it. But others do.

These two examples, the epoll broken semantics and the scheduler performance comparison, are the things that matter. And in some loads one OS can perform better than others, or similarly. Why similarly? Because concepts are similar, because the issues up front are almost the same for everyone, and because we are all the same kind of human.

The license aspect.

I will be short in here because long discussions and explanations have been given about licensing in software. I may not be totally accurate but I’ll try to briefly and simply describe the very basic attributes of the GPL, the 2-clause BSD and the CDDL.

GPL is a viral license. Basically, the code, the code you modify, the code you expand a program with, has to be shared if you make binaries publicly available. Share and share alike. You have to share in the same terms the code has been shared with you. Quid pro quo style.

The 2-clause BSD license is very, very short and very, very basic. Premise one is: use the code as you like. The second premise is: don’t sue the original coders. This allows companies to grab open source code, make modifications and make proprietary software with it. Or share them back as open source, totally or partially.

The CDDL was inspired by the Mozilla Public License. To summarize the CDDL one may say it is the middle ground license between a permissive one such as the BSD or the MIT and the strong copyleft GPL. Files with code under the CDDL should be maintained available under the CDDL but there is no problem with combining them with proprietary ones.

Yes, very basic definitions. Go read them in depth if you want to understand better and digest everything.

What the companies are effectively doing.

In their jihad for profit at any cost, companies have literally hijacked the GPL in a way no one could have dreamed of.

The GPL, together with market shifts, solved the UNIX wars. The cost of a UNIX license back in the day was absurdly prohibitive. Code drift across the different implementations available (AIX, HP-UX, SunOS, IRIX,…) also brought some incompatibilities, or better said, the fear of unforeseeable incompatibilities. And Sun’s Solaris was threatening (at least that was the perception) to take the vast majority of the sales and the development of UNIX. During this time vendors were competing for the same market because they were selling operating systems, they were selling binaries. Proprietary binaries whose code was buried away from the public light. Nowadays vendors sell services on their Linux-based operating systems, giving the code away to anyone with eyeballs.

This is a very interesting perspective on OS development in terms of cost, especially for money-hungry companies. Developers coding certain parts of the system can be from one, two or three companies, and this code will benefit the rest of the community companies. In doing so, operating system development costs are reduced dramatically, because one vendor doesn’t have to develop the whole stack. Instead the focus goes to the bits the vendor is interested in. The years of volunteers developing code in Linux for free are long gone. You are not fooling anyone anymore but inexperienced youngsters and nostalgic hippies. That doesn’t mean there isn’t a ton of well-meaning, very talented people working for free on Linux-based projects. But core Linux developers are often paid developers, and there are very good reasons for that. Remove that hardcore lot of people and the whole building collapses in a finger snap.

What companies are developing Linux? Which are the ones contributing and benefitting? If you click on the links you will get an idea.

With the blessing of IBM back in the nineties and the shift from selling binaries to selling services (which is really what it is all about at certain levels), Linux has grown spectacularly. However, is this the only way to do business? Is the Linux GPL, or any GPL, the only way to do business with open source? Of course not.

Open source and free software is also the base of businesses such as Netflix. The website you visit to watch your favourite series is quite possibly a GNU/Linux powered one. But the heavy duty video streaming is based on FreeBSD powered appliances. And more amazingly, they are running the development branch of FreeBSD in production. More details in this pdf presentation. Or, if you prefer, you can watch a video presentation.

Other examples of this heavy lifting and great company success can be seen at WhatsApp, now wrongly owned by Facebook. Back in 2012 WhatsApp was pushing 2 million concurrent connections on one box using FreeBSD. It is uncertain if they are still using it or they have been progressively moving to GNU/Linux, since Facebook was using it, and looking for developers on the Linux camp to match FreeBSD’s performance in heavy network loads.

Those are two examples of companies selling services and providing those using vanilla FreeBSD. But there are other ways.

Apple has been using FreeBSD code, heavily modified but heavily relied upon too. Darwin is the base open source operating system powering macOS. Not very usable, not coming in compiled form as it did in the past, no graphics, but open source and free to grab. As a reminder, Apple was the most valuable company in the world in 2018; however, Amazon is number one in 2019. iOS is also based on Darwin, so yes, FreeBSD code is running on iPhones and iPads.

Other companies? This is a list of some companies using FreeBSD in their code base from a short to a long extent. If you work in the IT industry you may be aware of Juniper Networks equipment or BlueCoat’s, as well as NetApp where the code is used for clusters holding data for backup, availability and integrity.

On the Linux camp you will find Google with their Android devices, Microsoft having a huge amount of Linux VMs in Azure and so on and so forth.

It all boils down to this: both operating systems are used by big successful companies, both have nice features, both do pretty well on most of the necessities of those businesses and hopefully both will continue to do so in the future. Stating that Linux has more money in it and more developers, and is therefore better with more features, is far from the truth overall.

That said, the Linux Foundation has much more money than the FreeBSD Foundation. More products are released using Linux (kernel) as a code base. That doesn’t mean the BSDs lack technical features (go back to the chart please), knowledge or business opportunities to be successful. It means fewer people are participating. The future is not already set.

Yet an interesting debate, futile to some extent, but maybe useful at some point, is the one that talks about what the companies are giving back to the projects. It is obvious the return in Linux will be always greater than in the BSDs or CDDL based projects, because of their licensing aspects. Is that an issue? I don’t think so, since companies are giving back code to both entities and both type of companies allow jobs for developers to enhance their respective projects. I think this is the key aspect, jobs, and development, which both camps offer good opportunities. But yes, maybe Apple, for example, could contribute back with more code, like… porting launchd to FreeBSD and the rest of the BSD family.

Consequences for the future generations.

Insult comes in many shapes and forms. I once heard: ‘yeah… the Mac is great, it’s even got Linux inside’. I almost vanished. If anything the Mac is a UNIX. With a nice graphical, user friendly, interface in front of it. But that Linux – UNIX confusion is not the problem.

Universities around the world are teaching Linux (the GNU/Linux combo) right and left, as if UNIX were something from the ugly past, not worth touching. Licensing is not given the necessary importance either. Future programmers need to know there is a ton of licenses to choose from, and that the GPL and the Linux thing are not the only ways of not just programming but being successful entrepreneurs.

The world will be very different in thirty years’ time. Computing and electronic devices are foreseen to populate our lives greatly. We need to give ourselves and the ones coming after us the tools and the mindset to expand the benefits of our work, to create new jobs, to create new tools, and to go beyond what we’ve already achieved. Oceans are still a mystery, the brain is another big black hole in our knowledge, space has to be the next frontier for a set of individuals, medicine, power, mining, the R (read recycling) industries, etc.

Are we going to give them only one giant mainstream mindset? The GPL/Linux one? Really?

Choice is the real freedom.

Richard Stallman pointed the right (right as in law) but failed with designing the tool. We need to leave aside for a moment the moral dimension of what Stallman stated, because it is clear of any doubt, because it is the morally correct choice, but also because it is not the way the world nor human beings behave.

Follow the money and you will get answers for many questions you may have. Yes, yes, ‘he had thought of it’ some may say. Bollocks. The moment I have the code from something I can build it and sell it. If you are making money from it I can sell it cheaper. And if I want to own the market it’s dead simple, I’ll leave it for free. Who can stand that? Only the ones with big pockets. The rest of us, the ‘innovators’, the ‘change breakers’, the ‘outsiders’ just have to wait to be bought.

The moment one believes forking is for free they are wrong. Forking is good but there is a cost. Technologically, on the market share and financially. I see the point Mr Stallman stated on those discontent users of one piece of software forking it or paying someone to do so. I only see founders of projects forking, not user base doing it. I do not even see regular users asking for forks, other than the systemd debacle. Which we all know how it has ended, with systemd on all major distributions, while others are building services on their own while still offering the major distros.

The dichotomy between proprietary and open source code, or free software code if you will, is a false one. Open source has won the battle because of the very nature of the industry. Companies need common ground, and a workforce that can be incorporated from the labour market already knowing the product, or the tool set used in the workplace. This industry is complicated enough for that to happen. Close your doors and no one will know how to work with you, how to advance your product, etc. Without open source there is no road ahead.

Companies still act as imbeciles, jerks if you will, whenever they’re given the opportunity. Why? They are governed by humans, just like me and you, and we are a bunch of individuals that sometimes act as imbeciles and some other times make great things. The real question is, what is Sony to do with the PlayStation code? Open it up? The moment they do this a competitor can grab the code and sell the same product for a lower price. Or they can make modifications and release a better product than Sony’s. What to do? Give the PS code away and just sell games? What about the games themselves?

Back in the day, patents were put in place for very good reasons. They are a partial solution to an unsolvable problem. This is why the GPL works so great. Everyone is profiting, investing just what is necessary, while no one is being hurt by others’ developments. Who cares about market competition? It seems to have moved to the top of the structure, to sales, to marketing departments, to perception, away from the nitty-gritty technicalities. Is this good? Is this bad? I have no idea.

Conclusion.

I am not saying these things because I am very knowledgeable, quite the contrary. My great (in size) ignorance drives me to read, learn, fail, learn and repeat over and over again the same crazy process. In fact I have only been involved in IT for just two years. And if I were some of you I’d be scared of the fact someone with just two years of experience can see this awfully menacing cloud, politically speaking of course. A menacing cloud is also a sign of great opportunity. Linux (the kernel) is everyone’s favourite toy. I want it to succeed but for that to happen a lot of tension will have to be correctly driven. And that is a very tough job. A true alternative culture in software is needed.

Linux has been late to many things, such as containers or copy-on-write file systems. Container-like technologies were developed back in the year 2000 with FreeBSD Jails, and later extended with Zones in Solaris. Properly finished and secure by default container structures. ZFS is a great copy-on-write file system, again developed for Solaris in the year 2004. Btrfs came out later and has never caught up in features or quality. SuSE is the only company defaulting to Btrfs and Red Hat is still using XFS, while Ubuntu still relies on old fashioned Ext4. Boot environments are yet to be seen in Linux land, yet OS rollbacks are possible on FreeBSD or Illumos in a matter of seconds to minutes (depending on reboot time). The only way Linux deals with this is by using VM technologies and/or restoring from backups. Let’s not forget the desktop, where proprietary software such as Windows or macOS (FreeBSD based) has been dominant, and has probably paid for the whole party.

Linux could be seen as leading the mobile devices market, but that leaves aside the iPhone, the watch and all the ‘i-things’. And NetBSD runs on anything you can imagine. Thinking about building an IoT device? FreeBSD can also be seen running on ARM hardware, and OpenBSD runs on several platforms too.

Discussion should steer away from ‘drunk lad comments in a pub’ towards something a bit more technical and concise. Again, ‘my system is better than yours because this beer is so great’ doesn’t go anywhere. Asking why the scheduler does this instead of that is usually more helpful. People in a very knowledgeable position are hard to find and their articles are a bit scarce on some topics. ‘Go read the mailing list’ is just a defensive answer.

The real issue behind FreeBSD’s ‘lesser perception’ is another ‘interesting’ question. People talk about Linux (GNU/Linux here) because it is now easy to install and use a desktop form of it. People aren’t talking much about the BSDs or the Illumos derivatives because they are not easy to install as a desktop. Many developers using Windows just play with VMs, and since there are companies supporting the Linux desktop, that is what they install. The only thing the BSDs lack, and FreeBSD in particular, is a great distribution like Ubuntu is for Linux. GhostBSD is a great choice for that. OpenIndiana has a nice desktop too. This is the only feature the BSDs lack compared to Linux. The rest is there, or even beyond in some cases. Now you know. And yes, the BSD folks may have to rush to have a decent, fast, easy to use desktop. Now, not tomorrow.

Money and business can be made using non-Linux software. The license is there (BSD license, MIT license, CDDL,…), the codebase is there (the BSDs as a whole, Illumos) and the practical cases are also there (Apple, Juniper, Netflix, NetApp,…). Maybe the guy who made me start this ranty article was right: not enough money is poured into the BSDs. But boy, you can make a ton of it with these tools.

Update 11-10-2019.

Because of the quite significant number of visits this very article has had, and the conversations it has sparked in some forums, I’d like to make some clarifications.

First, I’d like to thank those who disagree with me for the tone they have taken.

Secondly, yes, I am biased, as anyone is. I prefer the BSD camp over the Linux one, but over the Windows and Illumos ones too. That doesn’t make those other systems bad or worse, nor does it mean they have negative impacts. I just prefer one over the others. Isn’t that what we all do all the time?

The article is not intended to close on a certain truth; it leaves the ‘end’ open. I just wanted to challenge some ideas like:

- Linux is where the most innovation happens. To me this is not true. Linux is improving a lot, and has improved a lot over the last decade. But as I have pointed out above, some relevant innovations are only now arriving in this computing realm, such as a stable copy-on-write file system like ZFS (released in 2004), a debugging framework as powerful and complete as DTrace (even ported to Windows but not Linux, released in 2004 too), or the infectious (in a good sense) rise of containers, first seen in the form of FreeBSD Jails in the year 2000.

A ton of money bringing those features to Linux doesn’t mean Linux is innovating. Linux is taking the good parts of other systems and integrating them. Because Linux is the DMZ, the ‘neutral’ territory where big companies participate in OS development, it seems to be the ultimate land where innovation happens. Users, managers and, in the end, customers have been brought to a product that lacks the ‘innovations’ so acclaimed nowadays. The power of marketing is always overwhelming. Sorry, but someone had to say it.

- The hijacking of the GPL by IBM (and subsidiaries), Oracle, Cisco and the like is so obvious that the money making question has to be brought up, and people should think about the consequences of the words ‘open source’ and ‘free-libre software’. Money pays the rent, the mortgage and the food on the table. How does the GPL allow a small player to compete in today’s market? To me this is an open question with not so nice answers. Other companies opting for other paths in licensing or product base have succeeded and made lots of money, developing themselves and bringing the economy forward, without using the GPL, or at least using it to a much lesser extent.

- Success can come in many shapes and forms. And this is a very important message at the university level. Many of those who read the article on November 4th did so from the US: a big 80%, against the typical 50% of my readers. Congratulations if you have diversity and choice in the US, because in many European universities all you get is Linux for breakfast, Linux for lunch and Linux for supper. Future generations will bring computation to everyday life at an accelerating pace. The licensing choice (or the code base chosen for developing a product) can be a legal blessing or an evil trap, not just for them, since it may have a social impact too. Students need to know this and need to have alternatives taught to them. Even if those alternatives end up being discarded.

- Bringing up some Linux issues such as epoll did have something to do with the ‘versus’ thing. Sure, a good part of it. But it also, at least in my head, has to do with what we look at. As I just said, political issues matter, and the point is whether the policy allows you to move forward and correct issues, and overall whether it brings valid solutions for all. Of course the BSD land has technical issues, but some members of the GNU/Linux community don’t like theirs being pointed out. I’m not sorry for telling everyone we are all flawed in some way.

Reading comprehension is something we all ought to improve, myself included. I want to point out a few things some may have overlooked. They may diminish my technical reputation, but honesty comes first.

The URL begins with adminbyaccident. Isn’t that salty? Then it says ‘politics’ since that is the category I put this article in. The title contains a ‘versus’, which is always controversial. Not happy with these ‘warning’ signs, since they may not be clear to many, I clarified that all the text was my personal opinion, and as such it may change because it could be flawed for some reason. I also said I wrote the chart off the top of my head without proper research. Again, this is an opinion article, not a research paper.

Don’t get dragged into the trenches just because someone has the ability to put together a somewhat decent to read piece of text. Just take some distance and some salt and then come to your own conclusion, if that is achievable for you. Simply put: don’t take this too seriously. Just think a bit about it.

Thanks to Guy M. Broome for pointing out a few corrections in the Illumos column, such as VRRP for high availability, and RBAC and privileges with regard to services execution control.
